In 2025, data engineering has emerged as one of the most critical and sought-after roles in the tech industry, especially at elite companies collectively known as FAANG (Facebook/Meta, Amazon, Apple, Netflix, Google) and their peers.

As businesses are increasingly driven by data, the demand for highly skilled data engineers who can design, build, and optimize the complex data infrastructures required for analytics, artificial intelligence, and business intelligence has skyrocketed.

Data engineers form the backbone of any data-driven company by creating reliable, scalable data pipelines and architectures that enable data scientists, analysts, and decision-makers to extract meaningful insights from vast amounts of raw data. Unlike data scientists who focus more on modeling and analytics or data analysts who interpret data, data engineers build and maintain the infrastructure that makes these activities possible.

For anyone aiming to land a data engineering role at a FAANG company, understanding the evolving technological landscape, mastering sophisticated tools, and strategically preparing for rigorous FAANG interviews are essential. This guide delves deep into what data engineering entails today, the skills you need, the roadmap to FAANG success, and how artificial intelligence is shaping the future of data engineering roles.

Key Takeaways

- Master advanced SQL, Spark or Flink, and cloud warehouses to design scalable batch and streaming data platforms for FAANG-level demands.

- Use Airflow and dbt for robust orchestration, testing, lineage, and governed ELT that meets strict SLAs and compliance needs.

- Apply star or snowflake modeling with SCD Type 2, balancing normalization and performance for analytics and ML readiness.

- Build AI-aware pipelines leveraging GenAI and LLMOps patterns like RAG, prompt/version control, caching, and real-time observability.

- Showcase end-to-end portfolio projects with measurable latency, reliability, and cost improvements to signal platform ownership.

- Prepare for interviews by drilling SQL and Python, Spark internals, and system design with clear, quantified trade-off narratives.

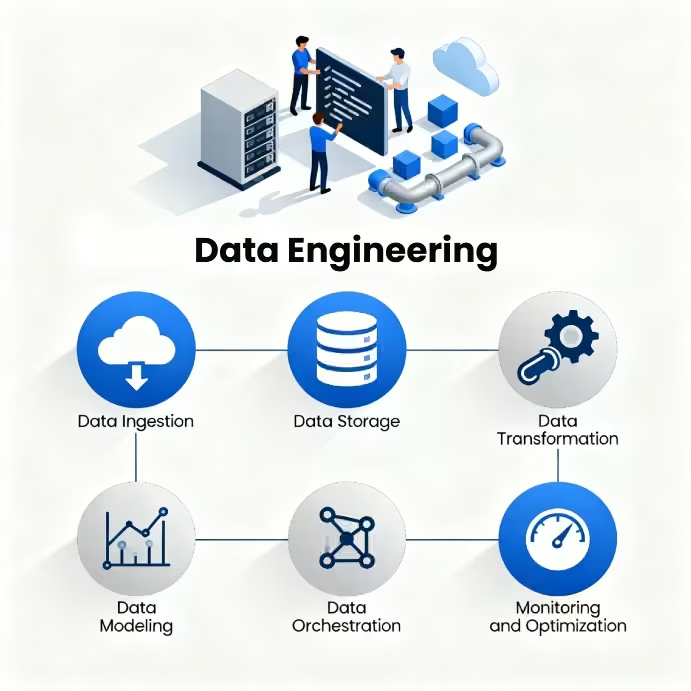

What is Data Engineering?

Data engineering is all about building and maintaining the systems that keep an organization’s data flowing smoothly. It involves designing the infrastructure that gathers information from different sources, stores it securely, and transforms it into a usable form. In a data-driven business, this discipline lays the groundwork for everything else.

Data engineering ensures that raw data is cleaned, organized, and easily accessible for analytics, reporting, or machine learning. Without strong data engineering, even the best insights or algorithms wouldn’t have reliable data to stand on.

A data engineer’s main job is to design and maintain data pipelines and automated systems that move information from its original sources to storage solutions like data warehouses or data lakes. These pipelines are the backbone of analytics and decision-making, ensuring that data flows smoothly and efficiently across the organization.

Along the way, they handle processes like Extract, Transform, Load (ETL) or Extract, Load, Transform (ELT), making sure the data that reaches analysts and data scientists is accurate, consistent, and ready to use.

The role requires deep technical expertise in:

- Programming languages like Python and Java, essential for scripting and creating scalable data applications.

- Advanced SQL for querying relational databases and optimizing data retrieval.

- Distributed data processing frameworks such as Apache Spark and Hadoop for handling massive datasets.

- Real-time data streaming technologies like Apache Kafka and Apache Flink, enabling continuous data ingestion and processing.

- Cloud platforms like AWS, Azure, or Google Cloud that provide scalable storage (e.g., S3, BigQuery) and processing services.

Data engineers also embrace modern practices such as data orchestration with tools like Apache Airflow, infrastructure as code (Terraform), and automated testing and monitoring of data workflows.

In essence, data engineering bridges the gap between raw data and usable insights, enabling data scientists, analysts, and business teams to make data-driven decisions without worrying about the complexities of data handling.

As FAANG companies handle vast and complex datasets, data engineers in these organizations are expected to master scalable distributed systems, cloud-native architectures, and cutting-edge pipeline orchestration tools, while ensuring data quality, governance, and security.

Roles and Responsibilities of Data Engineers

A data engineer’s core mission is to design, build, and maintain the systems that keep data reliable, scalable, and accessible across an organization. Their work underpins analytics, product development, and machine learning initiatives, essentially turning raw data into a strategic asset.

At FAANG companies, this role is deeply technical, centered on scalability, performance, and innovation at extraordinary levels of complexity. Data engineers here do not just manage data; they engineer the pipelines and architectures that make global-scale intelligence possible.

Key Responsibilities

- Designing and Managing Data Architecture: Developing scalable databases, data lakes, and modern cloud-native storage systems across AWS, Google Cloud, or Azure environments.

- Building Data Pipelines: Creating automated ETL and ELT workflows using tools like Apache Airflow and dbt to ensure data is timely, accurate, and analytics-ready.

- Optimizing Data Processing: Utilizing distributed systems such as Hadoop and Spark, along with real-time frameworks like Kafka or Flink, to process enormous volumes of data every day, a routine necessity in FAANG ecosystems.

- Cloud Systems Integration: Harnessing cloud data platforms such as Redshift, BigQuery, or Snowflake to balance performance, reliability, and cost-effectiveness.

- Data Quality and Governance: Establishing robust validation, monitoring, and logging frameworks to safeguard data integrity, security, and regulatory compliance (GDPR and beyond).

- Database Optimization: Applying advanced SQL and NoSQL techniques such as indexing, partitioning, and window functions to deliver high-performance queries and ensure system resilience.

- DevOps and Automation: Implementing Infrastructure-as-Code (Terraform), CI/CD workflows (Jenkins, GitHub Actions), and automated testing to support rapid, reliable deployment cycles.

- Cross-Team Collaboration: Partnering closely with data scientists, software engineers, and business stakeholders to design adaptable, high-impact data solutions that align with both analytical and operational goals.

Day-to-Day tasks may include:

- Developing and deploying new pipelines to onboard data or add features.

- Monitoring existing workflows for failures, performance drops, or anomalies.

- Working with domain teams to model data (star/snowflake schemas, fact/dimension tables) for specific product needs.

- Troubleshooting distributed compute clusters, scaling resources, and managing infrastructure costs.

Data engineers at FAANG and other leading tech companies apply software engineering principles to data systems, combining both skill sets to build reliable, scalable, and efficient data platforms that support products and analytics on a global scale.

Essential Data Engineering Skills

Becoming a data engineer at a FAANG company in 2025 demands more than familiarity with data tools. It requires depth, mastery, and adaptability across a suite of advanced technologies and methodologies. Here’s what you need to focus on.

Programming Languages:

Mastery of Python is highly valued for scripting, data manipulation (Pandas), and distributed processing (PySpark). Java (and sometimes Scala) is vital for building high-performance backend data systems at scale.

SQL Expertise:

Beyond basic CRUD, advanced SQL topics like window functions, subqueries, indexing, and query optimization are absolutely crucial. FAANG interviews often include highly complex SQL challenges, requiring you to join, aggregate, and analyze massive datasets efficiently.

Big Data Frameworks

Deep experience with distributed processing using tools like Apache Spark (for real-time and batch in-memory computation) and Hadoop (for massive disk-based processing). An understanding of partitioning, shuffling, and optimizing for scale is expected.

Real-Time Data Streaming

Proficiency with tools like Apache Kafka and Apache Flink, which support event-driven, real-time analytics, is an important technical skill at companies like Netflix and Meta.

NoSQL and Modern Data Storage

FAANG engineers work with both SQL and NoSQL systems like DynamoDB, MongoDB, Redis, and Cassandra for elastic and specialized data storage needs. Apart from having a technical knowledge of data storage tools, you must also understand which data model fits specific scenarios.

Cloud Platforms

Expertise in one or more major cloud providers: AWS (S3, Glue, Redshift, Lambda), Google Cloud (BigQuery, Dataflow), or Azure (Data Lake, Synapse). Databricks and Snowflake are also widely used platforms that continue to gain adoption.

ETL/ELT and Orchestration

Building robust, automated data pipelines using Airflow, dbt, Prefect, or Dagster. Knowledge of ELT (transforming data in-warehouse) is now critical, not just traditional ETL.

DevOps and Infrastructure-as-Code

Ability to use Terraform or CloudFormation for automated resource provisioning. Experience with CI/CD pipelines (Jenkins, GitHub Actions) to test, deploy, and monitor data workflows.

Data Modeling and Warehousing

Ability to design star/snowflake schemas, dimension/fact tables, and apply normalization vs. denormalization, with a focus on performance and scalability.

Modern and Advanced Areas

AI/ML Data Pipelines

Increasingly, data engineers are expected to support machine learning workflows: serving feature stores, managing large unstructured datasets, and enabling model retraining.

Data Governance and Quality

Implementing tools for data validation, monitoring, lineage, observability, and enforcing compliance (GDPR, CCPA).

Communication and Collaboration

For a data engineer, soft skills matter too. Data engineers must be adept at working with cross-functional teams, including software engineers, data scientists, and product managers, which requires clear communication and a collaborative approach.

What Sets Apart a FAANG Data Engineer in 2025?

What truly sets a FAANG data engineer apart in 2025 is their ability to think far beyond just building data pipelines. They’re experts at working with petabyte-scale datasets, making sure systems run reliably, quickly, and without wasting resources.

Their strong foundation in system design means they’re able to design complex distributed data platforms that can handle huge amounts of information with minimal downtime. Importantly, success in this field also means embracing a mindset of continuous learning.

New tools, frameworks, and practices like data mesh, lakehouse architectures, or generative AI keep reshaping the landscape. FAANG Data Engineers stay on top by adapting swiftly and always expanding their skill set. It’s this blend of technical depth, big-picture thinking, and constant growth that defines the next generation of top data engineers.

How to Transition to FAANG Data Engineer in 2025

Breaking into a FAANG company as a data engineer is not easy, but with a practical plan you can build on your existing skills and make the transition. Preparing for FAANG requires a clear, structured learning path and, more importantly, consistent action.

FAANG companies want data engineers who don’t just know theory, but can apply their skills to solve real-world, complex data problems at scale. Here’s a structured roadmap for a FAANG data engineer.

Step 1: Advance Your Programming Skills

If you’re an experienced data engineer, moving to a FAANG role means taking your coding skills far beyond daily scripting or basic ETL automation. FAANG teams expect you to write production-quality, modular, and efficient code, primarily in Python and Java, but also often in Scala for some backend and big data applications.

Python stands out because of its readability, versatility, and rich open-source ecosystem. You should be highly proficient with advanced features of Python, such as decorators, generators, context managers, and multi-threading or multiprocessing for concurrent workloads. Working with data at scale means you’ll be leveraging libraries like Pandas for complex data wrangling and PySpark for distributed computing.
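
As a rough illustration of those language features, the sketch below combines a retry decorator, a batching generator, and a timing context manager. The names `retry`, `batched`, `timed`, and `flaky_load` are hypothetical helpers invented for this example, and the loader only simulates a transient failure.

```python
import functools
import time
from contextlib import contextmanager


def retry(max_attempts=3, delay_seconds=0.0):
    """Decorator: re-run a flaky function up to max_attempts times."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except Exception:
                    if attempt == max_attempts:
                        raise  # out of attempts: surface the original error
                    time.sleep(delay_seconds)
        return wrapper
    return decorator


def batched(records, batch_size):
    """Generator: yield fixed-size lists without loading everything at once."""
    batch = []
    for record in records:
        batch.append(record)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch  # final partial batch


@contextmanager
def timed(label, timings):
    """Context manager: record elapsed seconds for a block under `label`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[label] = time.perf_counter() - start


calls = {"count": 0}


@retry(max_attempts=3)
def flaky_load(batch):
    """Hypothetical loader that fails on its first call only."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise ConnectionError("transient failure")
    return len(batch)


timings = {}
with timed("load", timings):
    loaded = sum(flaky_load(b) for b in batched(range(10), batch_size=4))
```

Here ten records are loaded in three batches, and the first batch succeeds on its second attempt. In a real pipeline the retry would usually catch a narrower exception type and back off between attempts.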

Java (and sometimes Scala) becomes critical for building back-end data platforms that handle FAANG-level throughput and concurrency. Here, you should demonstrate knowledge of JVM optimizations, memory management, and the ability to debug and tune large-scale batch and streaming data jobs.

It’s also essential to treat code as a product. This means clean architecture, rigorous error handling, solid logging, and automated unit/integration tests in every pipeline. Code reviews and collaboration are a given, as FAANG expects engineers to contribute to and critique massive codebases that operate at a global scale.

Beyond pure programming, a FAANG-level data engineer is continuously automating repetitive tasks, optimizing scripts for performance, and integrating with APIs and service layers across cloud and hybrid environments. The goal is not just to make data pipelines work, but to make them efficient, reusable, and future-proof.

To stand out, you need to demonstrate not only how to write robust code, but also how to architect, test, optimize, and maintain it as part of a larger system. This depth and maturity in programming is an explicit expectation at FAANG and a clear differentiator in their hiring process.

Step 2: Learn Advanced SQL & Query Optimization

For data engineers stepping up to FAANG, mastery of advanced SQL is non-negotiable. At this level, you’re expected to design, optimize, and debug complex queries that operate on massive, high-velocity datasets, which are sometimes spread across distributed architectures and cloud warehouses.

Complex Joins and Window Functions

FAANG problem statements require not just simple joins, but multi-table joins, self-joins, and deep use of window functions (ROW_NUMBER, RANK, LAG/LEAD) to solve complex analytics scenarios.

For example, you may need to segment users by activity, calculate moving averages, or deduplicate large event streams.
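
A minimal sketch of two of those scenarios, deduplicating an event stream and computing a moving average, using Python's built-in `sqlite3` as a stand-in for a warehouse engine. The `events` table and its rows are made up for illustration, and the window functions assume SQLite 3.25 or newer.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE events (event_id TEXT, user_id INTEGER, event_ts INTEGER, amount REAL);
    INSERT INTO events VALUES
        ('e1', 1, 100, 10.0),
        ('e1', 1, 100, 10.0),   -- duplicate delivery of e1
        ('e2', 1, 200, 30.0),
        ('e3', 2, 150, 5.0),
        ('e4', 1, 300, 20.0);
""")

dedup_sql = """
WITH ranked AS (
    -- Number the copies of each event so only the first is kept.
    SELECT *,
           ROW_NUMBER() OVER (PARTITION BY event_id ORDER BY event_ts) AS rn
    FROM events
)
SELECT event_id, user_id, event_ts, amount,
       -- Previous event time for the same user (NULL for the first event).
       LAG(event_ts) OVER (PARTITION BY user_id ORDER BY event_ts) AS prev_ts,
       -- Two-row moving average of amount per user.
       AVG(amount) OVER (
           PARTITION BY user_id ORDER BY event_ts
           ROWS BETWEEN 1 PRECEDING AND CURRENT ROW
       ) AS moving_avg
FROM ranked
WHERE rn = 1
ORDER BY user_id, event_ts
"""
rows = conn.execute(dedup_sql).fetchall()
```

The duplicate `e1` row is removed before the per-user windows are computed, so the moving average is not skewed by double-counted events.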

CTEs, Subqueries, and Indexing

You’ll frequently employ Common Table Expressions (WITH clauses) and nested subqueries for readability and modularity. Understanding how to index and partition data is critical for reducing query latency when scanning billions of rows.

Performance Optimization Techniques

Inefficient SQL might be tolerable in small systems, but at scale its cost multiplies and can drain performance and money fast.

You need to apply strategies like predicate pushdown and query plan analysis, and handle NULLs and CASE expressions carefully, to optimize speed and cost. FAANG interviews often give scenarios where you must refactor or debug a slow query on terabytes of data.
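
Query plan analysis can be practiced on a small scale. The sketch below uses SQLite's `EXPLAIN QUERY PLAN` through Python's `sqlite3` to compare the plan for the same query before and after adding an index. The table, index name, and data are hypothetical, and cloud warehouses expose their own plan formats, so treat this only as a way to build the habit.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (event_id INTEGER, user_id INTEGER, payload TEXT)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(i, i % 100, "x") for i in range(1000)],
)


def plan(sql):
    """Return the human-readable detail column of SQLite's query plan."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


query = "SELECT * FROM events WHERE user_id = 42"
plan_before = plan(query)   # no index yet: the whole table is scanned
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
plan_after = plan(query)    # the filter can now be answered via the index
```

Comparing `plan_before` with `plan_after` shows the engine switching from a full scan to an index search, which is the kind of evidence worth citing when you justify an optimization.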

Real Business Scenarios

FAANG SQL problems are never purely theoretical. You’re likely to face case studies such as analyzing engagement on social platforms, querying user journeys across e-commerce data, or working with streaming service logs to extract timelines and trends.

Cloud Warehouses

FAANG engineers work with Redshift, BigQuery, and Snowflake, each with unique optimization strategies. Understanding columnar storage, federated queries, compute scaling, and serverless warehouse features is essential.

How to Practice Advanced SQL and Query Optimization?

- Solve real FAANG-style SQL challenges on platforms like LeetCode and StrataScratch, focusing on query optimization and business logic.

- Build, optimize, and document queries on large public datasets (Google BigQuery, Kaggle).

- Actively review execution plans and optimize for both speed and resource usage.

Transitioning to FAANG means moving from “just writing queries” to architecting reproducible, highly performant SQL workflows, backed by deep business understanding and cloud scalability. This skill is rigorously tested and even more crucial in production, where poorly written SQL can stall entire product pipelines or inflate cloud costs.

Step 3: Data Modeling & Warehousing for Scale

Transitioning to a FAANG data engineering role requires you to operate far beyond basic schema design or ad hoc reporting tables. You need to show that you can design data systems that scale, not just write SQL.

You also need to understand complex business problems and turn them into well-structured, efficient data models that can power large-scale analytics and machine learning.

Here are some key focus areas.

Star and Snowflake Schemas

Know when to use each: Star schemas for simplicity and performance, snowflake for normalization and flexible reporting. Design dimension tables (for user, product, time, etc.) and central fact tables (transactions, events) that capture business logic and enable efficient joins for massive query loads.
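
A toy star schema makes the fact/dimension split concrete. The sketch below, run through Python's `sqlite3`, uses hypothetical tables `dim_user`, `dim_product`, and `fact_sales` with invented rows; a production model would have many more attributes and surrogate-key management.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Dimensions describe the "who" and "what".
    CREATE TABLE dim_user    (user_key INTEGER PRIMARY KEY, country TEXT);
    CREATE TABLE dim_product (product_key INTEGER PRIMARY KEY, category TEXT);
    -- The fact table stores measurements plus foreign keys to each dimension.
    CREATE TABLE fact_sales (
        user_key    INTEGER REFERENCES dim_user (user_key),
        product_key INTEGER REFERENCES dim_product (product_key),
        amount      REAL
    );
    INSERT INTO dim_user VALUES (1, 'US'), (2, 'DE');
    INSERT INTO dim_product VALUES (10, 'books'), (20, 'games');
    INSERT INTO fact_sales VALUES (1, 10, 12.0), (1, 20, 30.0), (2, 10, 8.0);
""")

revenue_by_country_category = conn.execute("""
    SELECT u.country, p.category, SUM(f.amount) AS revenue
    FROM fact_sales AS f
    JOIN dim_user AS u    ON u.user_key = f.user_key
    JOIN dim_product AS p ON p.product_key = f.product_key
    GROUP BY u.country, p.category
    ORDER BY u.country, p.category
""").fetchall()
```

Every analytical question becomes a join from the central fact table out to one hop of dimensions, which is what keeps star-schema queries simple to write and to optimize.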

OLTP vs OLAP

Understand the trade-offs between transactional (OLTP) databases and analytical (OLAP) warehouses. Design solutions that fit streaming, batch, and hybrid workloads, with a close eye on concurrency, cost, and latency.

Handling Slowly Changing Dimensions (SCD)

Master strategies for preserving historical changes: Type 1 (overwrite), Type 2 (add row, track history), Type 3 (add columns for limited history). This is especially critical for FAANG, where long-term product or customer analysis is the norm.
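
The Type 2 strategy can be sketched in a few lines of plain Python. The `DimRow` structure, the `apply_scd2` and `city_as_of` helpers, and the customer data are all hypothetical; in a warehouse the same logic is usually expressed as a MERGE statement or a dbt snapshot.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DimRow:
    customer_id: int
    city: str
    valid_from: str            # ISO date the version became effective
    valid_to: Optional[str]    # None means "still current"
    is_current: bool


def apply_scd2(dim: List[DimRow], customer_id: int, city: str, change_date: str) -> None:
    """Type 2 update: close the current row and append a new version."""
    current = next(
        (r for r in dim if r.customer_id == customer_id and r.is_current), None
    )
    if current is not None:
        if current.city == city:
            return  # nothing changed, keep the existing version
        current.valid_to = change_date
        current.is_current = False
    dim.append(DimRow(customer_id, city, change_date, None, True))


def city_as_of(dim: List[DimRow], customer_id: int, date: str) -> Optional[str]:
    """Point-in-time lookup; ISO dates compare correctly as strings."""
    for r in dim:
        if (r.customer_id == customer_id and r.valid_from <= date
                and (r.valid_to is None or date < r.valid_to)):
            return r.city
    return None


dim: List[DimRow] = []
apply_scd2(dim, 7, "Berlin", "2024-01-01")
apply_scd2(dim, 7, "Berlin", "2024-03-01")   # no change, no new row
apply_scd2(dim, 7, "Munich", "2024-06-01")   # new version, old one is closed
```

Because old versions are closed rather than overwritten, `city_as_of` can answer "where was this customer on a given date", which a Type 1 overwrite cannot.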

Data Warehouse Solutions

Be hands-on with Redshift, BigQuery, and Snowflake. Learn how to leverage features like columnar storage, separation of compute and storage, automatic clustering, partitioning, query parallelization, and pricing optimizations.

Normalization vs Denormalization

Understand how to strike a balance between normalization and denormalization. Normalize for data integrity but denormalize for performance in analytic workflows. Be ready to explain why you made each choice, as FAANG interviewers want to understand your reasoning along with your design.

Source-to-Target Mapping

Design clear mappings and transformation logic for complex ingestion processes, handling data from various sources, varying formats, and evolving schema requirements.

At the FAANG level, your models must support diverse analytics, reporting, and ML use cases with minimal downtime or rework. You’ll be working with domain-driven teams, designing flexible models that support both rapid experimentation and rigorous compliance.

For example, you might be asked to design a schema for a multi-tenant product analytics platform, laying out fact tables to support billions of daily events, with dimensions to analyze flows, segments, or behaviors across various user hierarchies and geographies.

As a FAANG Data Engineer, you will architect high-performance, cost-effective, and future-proof data platforms that become the nervous system for all major product and business decisions at scale.

Step 4: Build End-to-End Distributed Data Platforms (Spark & Hadoop)

For experienced data engineers targeting FAANG, you must move from running ETL jobs on a single system to architecting and managing distributed data platforms built for massive scale. FAANG-scale data requires frameworks that can process terabytes to petabytes daily, reliably, quickly, and under stringent latency and cost pressures.

Apache Spark

Spark is the industry standard for high-velocity, in-memory data processing across both batch and streaming use cases. To succeed at FAANG, you must:

- Master the distinctions between RDDs, DataFrames, and Datasets.

- Use Spark SQL for distributed analytics, and develop performant pipelines using transformations, map-reduce, joins, aggregation, and UDFs.

- Understand Spark’s lazy evaluation, directed acyclic graph (DAG) execution model, caching, checkpointing, and optimization strategies like broadcast joins and skew handling.

- Leverage Structured Streaming in Spark for real-time use cases.
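
To build intuition for two of the ideas above, lazy evaluation and broadcast joins, here is a plain-Python simulation. It does not use the Spark API: `broadcast_join` is a hypothetical function, partitions are ordinary lists, and the "broadcast" table is just a dictionary that every partition probes locally.

```python
from typing import Dict, Iterator, List, Tuple

Event = Tuple[int, str]            # (user_id, action)
Joined = Tuple[int, str, str]      # (user_id, action, country)


def broadcast_join(
    partitions: List[List[Event]], small_table: Dict[int, str]
) -> Iterator[Joined]:
    """Inner-join every partition against one in-memory copy of the small table.

    The large side is never shuffled because each partition can probe
    the lookup table locally.
    """
    for partition in partitions:          # in a cluster: one task per partition
        for user_id, action in partition:
            country = small_table.get(user_id)
            if country is not None:       # inner join: drop unmatched keys
                yield (user_id, action, country)


# Large fact data split into two partitions; small dimension as a dict.
event_partitions = [[(1, "click"), (2, "view")], [(1, "buy"), (3, "view")]]
user_country = {1: "US", 2: "DE"}

joined = broadcast_join(event_partitions, user_country)   # lazy: nothing has run yet
result = list(joined)                                     # forcing the result runs the join
```

The generator mirrors how a transformation only describes work and an action triggers it. The dictionary lookup mirrors why a broadcast join avoids an expensive shuffle when one side is small enough to fit in memory on every worker.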

Hadoop

Hadoop underpins large-scale data storage and disk-based processing, and is foundational for legacy and hybrid cloud pipelines. You should understand:

- Distributed storage with HDFS

- Resource management with YARN and batch processing with MapReduce

- When to choose Hadoop (disk-based, large but infrequent jobs) over Spark (in-memory, low latency)

Cluster Sizing, Resource Management, and Performance

At FAANG, you’ll be expected to size clusters, allocate resources efficiently, and monitor/optimize pipelines to handle dynamic, global workloads. You need to understand:

- How to select the number/type of cluster nodes based on workload and cost constraints

- Monitoring with Spark UI, Hadoop ResourceManager, Ganglia, or cloud-native dashboards

- Diagnosing failed jobs, tuning partition counts, executor memory, and shuffle operations to meet SLAs on high-throughput jobs.
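
Partition tuning often starts with simple arithmetic. The helper below is a heuristic sketch, not an official formula: `suggest_partitions` is a hypothetical function, the 128 MiB target is an assumed rule of thumb (it matches the default of Spark's `spark.sql.files.maxPartitionBytes`), and the job size and core count are invented.

```python
import math


def suggest_partitions(
    input_bytes: int,
    target_partition_bytes: int = 128 * 1024**2,
    total_cores: int = 1,
) -> int:
    """Heuristic partition count: size-based, rounded up to a multiple of cores."""
    by_size = math.ceil(input_bytes / target_partition_bytes)
    by_size = max(by_size, total_cores)               # keep every core busy
    return math.ceil(by_size / total_cores) * total_cores


# Hypothetical job: 500 GiB of input on 10 executors x 4 cores = 40 cores.
partitions = suggest_partitions(500 * 1024**3, total_cores=40)
waves = partitions // 40    # number of task "waves" each stage needs
```

For this job the estimate is 4,000 partitions, processed in 100 waves of 40 tasks. Real tuning then adjusts for skew, shuffle size, and executor memory using what the Spark UI reports.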

As a FAANG Data Engineer, you will be building core data infrastructure to power analytics, personalized products, and AI/ML in some of the world’s most data-intensive businesses. Depth in Spark, Hadoop, and the broader distributed ecosystem is a baseline expectation at FAANG.

Step 5: Real-Time Streaming Expertise (Kafka & Flink)

Modern FAANG data platforms are driven by real-time analytics and event-driven architectures, not just batch pipelines. As an experienced data engineer targeting FAANG, you need to demonstrate advanced skills in building streaming systems that can ingest, process, and deliver insights from massive flows of continuous data.

Apache Kafka

Kafka is the backbone of distributed event streaming. At scale, you’ll work with Kafka clusters handling trillions of events per day, enabling microservices and applications to consume data with sub-second latency.

- Understand topics, partitions, replication, and retention policies.

- Design producers/consumers for reliable, fault-tolerant ingestion.

- Integrate Kafka with Spark, Flink, or other analytics engines for end-to-end pipelines.
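
The relationship between keys, partitions, and ordering can be simulated without a broker. In the sketch below, `partition_for` is a hypothetical stand-in for a producer's partitioner: it uses CRC32 for simplicity, whereas Kafka's default partitioner hashes keys with murmur2. The topic is just a dictionary of lists.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Map a record key to a partition; the same key always lands on the same one."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


NUM_PARTITIONS = 4
topic = defaultdict(list)   # partition id -> ordered log of (key, value)

records = [("user-1", "login"), ("user-2", "login"),
           ("user-1", "click"), ("user-1", "logout")]
for key, value in records:
    topic[partition_for(key, NUM_PARTITIONS)].append((key, value))

# All events for one key sit in a single partition, in the order produced.
user1_partition = partition_for("user-1", NUM_PARTITIONS)
user1_log = [v for k, v in topic[user1_partition] if k == "user-1"]
```

Ordering is guaranteed only within a partition, so choosing the record key (for example, a user or account id) decides which events a consumer will see in sequence.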

Apache Flink (and Spark Streaming)

Flink excels at real-time stream processing, with support for event-time windows, stateful computations, and exactly-once processing guarantees.

- Master window functions, state management, join operations, and operator chaining for complex analytics.

- Design low-latency dashboards, personalized feeds, and real-time anomaly detection.

Event-Driven & Real-Time Use Cases

FAANG Data Engineers build:

- Real-time recommendation engines (Netflix, YouTube)

- Fraud and anomaly detection on payment and activity streams

- Clickstream analytics and dynamic user segmentation for apps at a global scale

Handling Late/Out-of-Order Data & Fault Tolerance

Learn how to manage late-arriving records, watermarking, and state recovery, ensuring data integrity even with network delays or failures.
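
Watermarking is easier to reason about with a small simulation. The sketch below is plain Python, not the Flink or Spark API: it counts events in 10-second tumbling windows, lets the watermark trail the largest event time seen by 5 seconds, and drops events whose window has already been finalized. The constants and the input sequence are invented for illustration.

```python
from collections import defaultdict

WINDOW_SIZE = 10        # seconds per tumbling window
ALLOWED_LATENESS = 5    # how far the watermark trails the max event time


def window_start(event_time: int) -> int:
    return event_time - (event_time % WINDOW_SIZE)


def process(events):
    """Count events per event-time window.

    Returns (emitted, dropped, still_open): finalized window counts,
    events that arrived too late, and windows not yet closed.
    """
    open_windows = defaultdict(int)   # window start -> running count
    emitted, dropped = {}, []
    max_event_time = 0
    for event_time in events:         # events arrive in processing order
        max_event_time = max(max_event_time, event_time)
        watermark = max_event_time - ALLOWED_LATENESS
        start = window_start(event_time)
        if start + WINDOW_SIZE <= watermark:
            dropped.append(event_time)        # its window was already finalized
        else:
            open_windows[start] += 1
        # Finalize every window whose end is at or before the watermark.
        for s in sorted(w for w in open_windows if w + WINDOW_SIZE <= watermark):
            emitted[s] = open_windows.pop(s)
    return emitted, dropped, dict(open_windows)


emitted, dropped, still_open = process([1, 4, 12, 8, 16, 3, 27])
```

The event at time 8 arrives out of order but before the watermark passes its window, so it is still counted. The event at time 3 arrives after window `[0, 10)` has closed and is dropped. Production systems typically route such records to a side output or a reprocessing path instead of discarding them.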

Monitoring & Scaling

Use distributed monitoring (Prometheus, Grafana), auto-scaling, and alerting to ensure reliability at a global scale. You also need to document SLAs, recovery plans, and performance metrics for end-to-end observability.

Design your systems for exactly-once semantics where possible (minimizing duplicates and data loss). You must always plan for backpressure, retries, and state persistence. Your goal is to ensure that business-critical analytics and user-facing features get the freshest, most accurate data, every second.
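
One common way to approximate exactly-once results is to pair at-least-once delivery with an idempotent sink. The sketch below simulates that idea in plain Python. `IdempotentSink` and `deliver_with_retries` are hypothetical names, and the lost acknowledgement is simulated with a flag rather than a real network failure.

```python
class IdempotentSink:
    """Sink that ignores event ids it has already applied."""

    def __init__(self):
        self.applied_ids = set()
        self.total = 0

    def write(self, event_id: str, amount: int) -> bool:
        if event_id in self.applied_ids:
            return False            # duplicate delivery: safe no-op
        self.applied_ids.add(event_id)
        self.total += amount
        return True


def deliver_with_retries(sink, event, fail_first_ack, max_attempts=3):
    """At-least-once delivery: resend until an acknowledgement arrives."""
    event_id, amount = event
    for attempt in range(1, max_attempts + 1):
        sink.write(event_id, amount)
        ack_lost = fail_first_ack and attempt == 1   # simulated lost ack
        if not ack_lost:
            return attempt
    raise RuntimeError(f"gave up on {event_id}")


sink = IdempotentSink()
attempts = [
    deliver_with_retries(sink, ("e1", 10), fail_first_ack=True),   # sent twice
    deliver_with_retries(sink, ("e2", 5), fail_first_ack=False),
]
```

Event `e1` is delivered twice, but the sink applies it once, so the total stays correct. In a real system the set of applied ids would live in durable storage, often updated in the same transaction as the write itself.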

At FAANG, real-time streaming has become foundational for live features, operational dashboards, and AI-driven products. Deep skill in Kafka, Flink, and robust streaming system design is a must-have for moving beyond basic batch ETL and contributing at platform scale.

Step 6: Cloud Data Engineering at Scale

FAANG data engineers are expected to architect, deploy, and manage data solutions almost exclusively in cloud environments. If you’re transitioning from an on-premise or hybrid role, mastering the cloud stack is a critical next step. Here is what’s expected of a FAANG Data Engineer.

Deep Specialization in One Cloud Platform

Rather than knowing AWS, GCP, and Azure in passing, build true expertise in one platform’s data products, such as AWS (S3, Glue, Redshift, Lambda), Google Cloud (BigQuery, Dataflow, Pub/Sub), or Azure (Data Lake, Synapse, HDInsight). Know every major storage and compute tool in detail.

Cloud-Native Data Engineering

Leverage cloud-native frameworks to build scalable, secure, and cost-efficient data lakes, warehouses, and streaming analytics platforms. Use serverless solutions for automation (Lambda, Dataflow, Azure Functions) to reduce operational overhead.

Infrastructure as Code and DevOps

Automate infrastructure using Terraform or CloudFormation. Set up CI/CD pipelines (Jenkins, GitHub Actions, GitLab CI) for continuous integration, deployment, and rollback. Cloud resource provisioning, access and network management (IAM, VPC), and automated scaling are default expectations.

Pipeline Monitoring and Cost Optimization

Proactively monitor resource usage, set up alerts, and use dashboards (Prometheus, Grafana, AWS CloudWatch) for visibility into pipeline health. Understand how to optimize costs by choosing the right storage class, spot vs. reserved compute, partitioning data logically, and cleaning up unused assets.

Security, Compliance, and Reliability

Design architectures with security and compliance as a core principle and ensure PII encryption, proper access controls, and auditability. Cloud-based data solutions must be resilient to failures, support automated recovery, and meet SLAs for uptime and latency.

Cloud ETL/ELT Best Practices

You need to keep pace with the shift from classic ETL to ELT, leveraging the computational power of cloud data warehouses like BigQuery and Snowflake for in-database transformations. Automate end-to-end pipelines using Apache Airflow, dbt, and native orchestration tools.

In FAANG environments, cloud data engineering is not just about “lifting and shifting” to the cloud, but about architecting platforms that can serve product analytics, machine learning, and real-time insights with global-scale reliability and speed.

For FAANG Data Engineers, cloud proficiency goes far beyond basic workflow knowledge. It requires a combination of deep technical expertise, automation skills, and a sharp focus on reliability, cost, and security.

Step 7: Workflow Orchestration and Automation

At the FAANG level, reliably moving, transforming, and monitoring data across countless systems requires more than manual scripting. It demands enterprise-grade orchestration. This means automating workflows and ensuring robust pipeline management with tools designed for complex dependencies and large production workloads. Here’s what you need to learn.

Apache Airflow

The gold standard for workflow orchestration. Define Directed Acyclic Graphs (DAGs) that sequence, schedule, and monitor pipeline tasks.

- Manage retries, failures, and alerts for resilient data workflows.

- Use Airflow sensors, operators, and hooks to interact with cloud services, databases, and file systems.

- Monitor pipeline health and keep SLAs on data freshness or ingestion accuracy.
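
The core ideas behind a DAG scheduler, dependency ordering plus retries, can be sketched with the standard library. This is not Airflow code: it uses `graphlib.TopologicalSorter` (Python 3.9+), and the task names, the `run_task` stub, and the simulated failure are all hypothetical.

```python
from graphlib import TopologicalSorter

# task -> set of upstream tasks it depends on (hypothetical pipeline)
dag = {
    "extract_orders": set(),
    "extract_users": set(),
    "transform": {"extract_orders", "extract_users"},
    "quality_check": {"transform"},
    "publish": {"quality_check"},
}

failures_left = {"transform": 1}   # simulate one transient failure


def run_task(name: str) -> None:
    """Stub task body: fails while the simulated failure budget remains."""
    if failures_left.get(name, 0) > 0:
        failures_left[name] -= 1
        raise RuntimeError(f"{name} failed")


def run_dag(dag, max_retries=2):
    """Run tasks in dependency order, retrying each up to max_retries times."""
    attempts = {}
    order = []
    for task in TopologicalSorter(dag).static_order():
        for attempt in range(1, max_retries + 2):
            attempts[task] = attempt
            try:
                run_task(task)
                break
            except RuntimeError:
                if attempt == max_retries + 1:
                    raise          # retries exhausted: fail the whole run
        order.append(task)
    return order, attempts


order, attempts = run_dag(dag)
```

The `transform` task succeeds on its second attempt and downstream tasks run only after it completes. An orchestrator such as Airflow adds scheduling, parallelism, backfills, and alerting on top of this basic contract.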

dbt (data build tool)

- Increasingly used for in-warehouse transformations, testing, and modularizing SQL logic.

- Version control your models, apply CI/CD workflows, and document lineage.

- Enable robust, auditable transformations directly in the warehouse (Snowflake, BigQuery, Redshift).
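
The testing idea can be tried without installing dbt. The sketch below imitates the spirit of dbt's built-in `unique` and `not_null` tests using Python's `sqlite3`: each check is a query that selects offending rows and passes when it returns none. The `stg_orders` table, its rows, and the `failing_rows` helper are hypothetical, and real dbt tests are declared in YAML rather than written this way.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE stg_orders (order_id INTEGER, customer_id INTEGER);
    INSERT INTO stg_orders VALUES (1, 100), (2, NULL), (2, 101);
""")


def failing_rows(sql: str) -> int:
    """A check passes when its query returns zero rows."""
    return len(conn.execute(sql).fetchall())


checks = {
    "unique_order_id": """
        SELECT order_id FROM stg_orders
        GROUP BY order_id HAVING COUNT(*) > 1
    """,
    "not_null_customer_id": """
        SELECT order_id FROM stg_orders WHERE customer_id IS NULL
    """,
    "not_null_order_id": """
        SELECT order_id FROM stg_orders WHERE order_id IS NULL
    """,
}
results = {name: failing_rows(sql) for name, sql in checks.items()}
failed = sorted(name for name, count in results.items() if count > 0)
```

Two of the three checks fail on this sample data. Wiring checks like these into CI is what turns in-warehouse SQL into something you can safely refactor.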

FAANG teams may also use Prefect, Dagster, or enterprise orchestration platforms to scale up and integrate with internal systems.

Core Skills:

- Design DAGs that handle conditional dependencies, parallel execution, and backfills.

- Set up logging, alerting, and monitoring (Prometheus, Grafana, CloudWatch) for proactive operational management.

- Orchestrate jobs across cloud-native platforms using Airflow-native and vendor-specific operators.

Transitioning to FAANG means moving from basic scheduling tools to sophisticated, resilient workflow orchestration that can power hundreds of production data pipelines, handling real-time triggers, incremental batch jobs, and enterprise-wide dependencies, all with deep monitoring and error handling.

Step 8: System Design for Robust, Scalable, and Secure Data Systems

At FAANG, data engineers are expected to design complex data platforms that are reliable, scalable, and secure across distributed, often global, systems. Here’s what you need to learn.

Distributed Architecture Principles

You must be able to architect platforms handling petabytes of data across clusters, regions, and cloud providers. This means understanding partitioning strategies, sharding, replication, and consensus (e.g., Paxos, Raft).

Batch vs. Streaming Design

Design both batch and streaming (real-time) pipelines, knowing when to use Spark, Hadoop, Kafka, or Flink based on latency, throughput, and business requirements.

Optimal File Formats and Storage

Select and implement file formats (Parquet, Avro, Delta) and storage systems (Data Lake vs. Warehouse vs. Lakehouse) for efficient access, schema evolution, and analytics.

Schema Evolution & Versioning

Build systems that handle frequent schema changes through auto-migration, backward/forward compatibility, and version control, keeping systems healthy as business needs evolve.
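
Backward and forward compatibility can be illustrated with a tiny reader function. The sketch below is loosely modeled on how schema-aware formats resolve reader and writer schemas, but it is not any library's API: `SCHEMA_V1`, `SCHEMA_V2`, and `read` are hypothetical, and a schema is reduced to a mapping from field name to default value.

```python
from typing import Any, Dict

# Hypothetical reader schemas: field name -> default used when a field is absent.
SCHEMA_V1 = {"user_id": None, "event": None}
SCHEMA_V2 = {"user_id": None, "event": None, "country": "unknown"}  # field added with a default


def read(record: Dict[str, Any], reader_schema: Dict[str, Any]) -> Dict[str, Any]:
    """Project a record onto the reader's schema.

    Missing fields take the schema default (new reader, old data);
    unknown fields are ignored (old reader, new data).
    """
    return {field: record.get(field, default)
            for field, default in reader_schema.items()}


old_record = {"user_id": 1, "event": "click"}
new_record = {"user_id": 2, "event": "view", "country": "DE"}

backward = read(old_record, SCHEMA_V2)   # new code reading old data
forward = read(new_record, SCHEMA_V1)    # old code reading new data
```

Adding a field with a default keeps both directions working. Removing or renaming a field without a default is the kind of change that breaks one side and usually needs a coordinated migration.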

Data Lineage, Governance, and Cataloging

Ensure you can trace data flow for audit and debug purposes. Leverage tools for cataloging, metadata management, automatic lineage tracking, and governance compliance (GDPR, CCPA).

Security and Privacy

Design with PII protection, row or column-level security, encryption (at rest and in transit), and robust access controls. Be ready to discuss compliance scenarios for global regulatory requirements.
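
A small sketch of two of these controls, PII pseudonymization and column-level access, using only the standard library. Note that this shows keyed hashing and masking, not encryption. `SECRET_KEY`, `ROLE_COLUMNS`, and the helper functions are hypothetical, and a real key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac

SECRET_KEY = b"demo-only-key"          # assumption: loaded from a secrets manager in practice
ROLE_COLUMNS = {                       # hypothetical column-level access policy
    "analyst": {"user_token", "country", "amount"},
    "support": {"user_token", "email_masked", "country"},
}


def pseudonymize(value: str) -> str:
    """Keyed hash: a stable join key that cannot be recomputed without the key."""
    return hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep the first character and the domain, hide the rest."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain


def protect(row: dict) -> dict:
    """Replace the raw email with a token and a masked form."""
    return {
        "user_token": pseudonymize(row["email"]),
        "email_masked": mask_email(row["email"]),
        "country": row["country"],
        "amount": row["amount"],
    }


def view_for(role: str, protected_row: dict) -> dict:
    """Column-level security: return only the columns the role may see."""
    allowed = ROLE_COLUMNS[role]
    return {k: v for k, v in protected_row.items() if k in allowed}


row = protect({"email": "ada@example.com", "country": "GB", "amount": 42})
analyst_view = view_for("analyst", row)
```

Analysts can still join and count by `user_token` without ever seeing an email address. In a warehouse, the same policy is normally enforced with native column-level grants or masking policies rather than application code.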

Observability & Monitoring

Build deep monitoring into platforms, covering logs, metrics, distributed tracing, and anomaly detection, using tools like Prometheus, Datadog, or custom dashboards.

Trade-off Analysis

In interviews and on the job, justify decisions on technology choices, consistency/availability, scalability versus cost, and how you adapt architecture to business constraints and SLAs.

Success as a FAANG engineer comes from designing platforms that withstand scale, failure, and rapid evolution. Demonstrating expertise in system design, security, and observability at scale is the mark of a truly senior data engineer.

Step 9: Impactful Projects and FAANG-Level Portfolio

FAANG hiring loops prioritize candidates who can point to production-grade systems and clearly articulate the architecture, trade-offs, and measurable business impact behind them, so your portfolio must showcase platform thinking, not just isolated scripts or notebooks.

What to demonstrate: Show end-to-end ownership across ingestion, storage, transformation, orchestration, observability, security, and cost controls, with design choices justified against scale, latency, and compliance constraints common at FAANG.

How to present: For each project, include a concise README, an architecture diagram, key SLAs/SLOs, data volumes processed, latency metrics, failure modes and mitigations, and a short Loom-style walkthrough so reviewers grok your system in minutes.

Recommended portfolio projects for experienced engineers targeting FAANG:

- Batch lakehouse pipeline: Ingest raw events to bronze, apply deduplication and quality checks to silver, and publish analytics/ML features to gold using Spark on Databricks or EMR, Delta Lake with time travel and schema evolution, and dbt for in-warehouse transformations, all orchestrated via Airflow with backfills and SLA alerts.

- Real-time streaming system: Build Kafka topics for clickstream or telemetry, process with Flink or Spark Structured Streaming using event-time windows, watermarking, and exactly-once semantics, then serve low-latency aggregates to a warehouse and a feature store for online inference, with end-to-end monitoring and replay strategies for late/out-of-order data, backpressure, and reprocessing scenarios.

- Domain data product: Model a domain (e.g., recommendations, ads, or payments) with star/snowflake schemas, SCD Type 2 dimensions, and contract-driven interfaces; publish discoverable, versioned tables with lineage in a catalog and explicit SLAs/SLOs to reflect the data mesh “data-as-a-product” expectations of large-scale enterprises, including FAANG.

- Cost-aware cloud replatform: Migrate a legacy batch pipeline to a cloud-native ELT pattern on BigQuery or Snowflake, quantifying savings via storage tiering, partitioning/clustering, and right-sized compute, while adding CI/CD, IaC with Terraform, and policy-as-code for IAM and PII governance to meet enterprise compliance needs like GDPR/CCPA alignment.
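To make the bronze-to-silver step of the batch lakehouse project more concrete, here is a minimal sketch in plain Python. It assumes hypothetical field names (`event_id`, `user_id`, `ingested_at`) and a hypothetical `to_silver` helper; a real pipeline would express the same logic in Spark or dbt over Delta tables.

```python
def to_silver(bronze_events):
    """Keep the latest version of each event_id and set aside rows that fail basic checks."""
    latest = {}
    rejected = []
    for event in bronze_events:
        # Quality check: required identifiers must be present.
        if event.get("event_id") is None or event.get("user_id") is None:
            rejected.append(event)
            continue
        # Deduplication: the most recently ingested copy wins.
        current = latest.get(event["event_id"])
        if current is None or event["ingested_at"] > current["ingested_at"]:
            latest[event["event_id"]] = event
    silver = sorted(latest.values(), key=lambda e: e["event_id"])
    return silver, rejected

bronze = [
    {"event_id": "e1", "user_id": 1, "ingested_at": 10, "amount": 5},
    {"event_id": "e1", "user_id": 1, "ingested_at": 12, "amount": 7},     # later duplicate
    {"event_id": "e2", "user_id": None, "ingested_at": 11, "amount": 3},  # fails the check
    {"event_id": "e3", "user_id": 2, "ingested_at": 13, "amount": 9},
]
silver, rejected = to_silver(bronze)
```

Keeping the rejected rows, rather than dropping them silently, gives you a quarantine table to report on in the project's data quality metrics.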

Packaging strategy for maximum signal:

Curate three flagship repos only, each with a tight narrative: problem, constraints, architecture, trade-offs, metrics, and what you’d do differently if traffic or domains doubled, mirroring how FAANG design reviews probe decision quality under changing requirements and scale.

Add a top-level portfolio README that links to each project, includes architecture snapshots, a 90‑second video per project, and a capability matrix mapping to FAANG expectations across SQL, Spark/Flink, Kafka, dbt, Airflow, BigQuery/Snowflake/Redshift, IaC, and governance/observability to let reviewers “pattern match” quickly to their environment.

Outcome to aim for:

Your portfolio should read like evidence of platform ownership: fewer toy datasets, more production-minded designs with reliability, performance, privacy, and cost trade-offs explicitly handled, which is the exact bar that distinguishes senior FAANG data engineers from strong individual contributors in smaller environments.

How to Crack the FAANG Data Engineer Interview

FAANG interviews measure depth across SQL, coding, distributed systems, cloud architecture, data modeling, and end-to-end pipeline design, with a strong emphasis on clarity of trade-offs, data quality, and operational maturity at scale.

Interview Structure

Expect a recruiter screen, technical screens covering coding and SQL, a data-focused system design/ETL round, and behavioral interviews that probe ownership, cross-functional collaboration, and impact at scale, with variations by company and loop sequencing.

Companies like Meta often separate data engineering and software engineering loops, while Amazon integrates leadership principles throughout each round, so preparation should be tailored per company format and emphasis areas shared by the recruiter.

SQL and Coding Prep

Prepare for 1–3 SQL questions that escalate in difficulty, focusing on multi-join queries, window functions, CTEs, subqueries, NULL handling, and performance tuning on columnar warehouses like BigQuery, Redshift, or Snowflake, including reading query plans and reducing scans via partitioning/clustering.
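A typical question in this style combines a CTE with window functions, for example "return each user's most recent order along with their running spend." The sketch below runs such a query with Python's built-in `sqlite3` module, assuming the bundled SQLite is version 3.25 or newer (when window functions were added). The `orders` table and its data are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (user_id INTEGER, order_date TEXT, amount REAL);
INSERT INTO orders VALUES
  (1, '2025-01-01', 20.0),
  (1, '2025-01-05', 35.0),
  (1, '2025-01-09', 15.0),
  (2, '2025-01-02', 50.0),
  (2, '2025-01-03', 10.0);
""")

query = """
WITH ranked AS (
  SELECT
    user_id,
    order_date,
    amount,
    -- rn = 1 marks each user's most recent order
    ROW_NUMBER() OVER (PARTITION BY user_id ORDER BY order_date DESC) AS rn,
    -- cumulative spend per user up to and including this order
    SUM(amount) OVER (PARTITION BY user_id ORDER BY order_date) AS running_total
  FROM orders
)
SELECT user_id, order_date, amount, running_total
FROM ranked
WHERE rn = 1
ORDER BY user_id;
"""

latest_orders = conn.execute(query).fetchall()
```

In an interview, be ready to explain why the filter on `rn` has to sit outside the CTE: window functions are evaluated after `WHERE`, so they cannot be filtered in the same query level.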

Coding rounds emphasize Python for data manipulation and algorithmic fluency, with problems spanning arrays, hash maps, tree/graph traversals, and applied transformations, prioritizing clean code, clear invariants, and edge-case handling under time constraints.

Data Modeling and Warehouse Design

Be ready to translate ambiguous product analytics needs into a fact/dimension model using star or snowflake schemas, articulate SCD strategies (Type 1/2/3), and justify normalization vs denormalization for query performance and maintenance costs at petabyte scale.
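The core of SCD Type 2 is small enough to sketch: when a tracked attribute changes, close out the current row and append a new version. The example below is a minimal in-memory version with a hypothetical customer dimension and a hypothetical `apply_scd2` helper; in a warehouse this would usually be a `MERGE` statement or a dbt snapshot.

```python
from datetime import date

def apply_scd2(dimension, incoming, effective):
    """Return a new dimension with `incoming` applied as an SCD Type 2 change.

    Tracks a single attribute (`city`) for brevity.
    """
    result = [dict(r) for r in dimension]  # copy so the input is not mutated
    current = next(
        (r for r in result
         if r["customer_id"] == incoming["customer_id"] and r["is_current"]),
        None,
    )
    if current is not None and current["city"] == incoming["city"]:
        return result  # nothing changed, so no new version is created
    if current is not None:
        # Close out the old version.
        current["valid_to"] = effective
        current["is_current"] = False
    # Append the new current version.
    result.append({
        "customer_id": incoming["customer_id"],
        "city": incoming["city"],
        "valid_from": effective,
        "valid_to": None,
        "is_current": True,
    })
    return result

dim = [{"customer_id": 7, "city": "Austin",
        "valid_from": date(2024, 1, 1), "valid_to": None, "is_current": True}]
updated = apply_scd2(dim, {"customer_id": 7, "city": "Denver"}, date(2025, 3, 1))
```

Type 1 would simply overwrite `city` in place, which is cheaper but loses the history that point-in-time analytics depend on.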

Present how the model supports downstream analytics and ML, including aggregate tables, data contracts, lineage, and SLAs for freshness and correctness in a domain-oriented setup aligned with data mesh principles where applicable.

ETL/ELT Pipeline and System Design

In design rounds, decompose the problem, clarify requirements and SLAs, and propose a layered architecture: ingestion queues (Kafka/SQS), distributed compute (Spark/Flink), storage (data lakehouse with Delta/Iceberg/Parquet), warehouse serving (BigQuery/Redshift/Snowflake), and orchestration (Airflow/dbt), with explicit failure modes and recovery paths.

Discuss batch vs streaming trade-offs, late/out-of-order handling with watermarking, exactly-once semantics, schema evolution/versioning, partitioning/bucketing strategy, and observability (metrics, logs, lineage) to meet latency and reliability targets.
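Watermarking is easier to discuss with a toy model in hand. The sketch below counts events in tumbling event-time windows and drops any event whose window has already passed the watermark. It is a deliberately simplified model, not how Flink or Spark implement it, and the `tumbling_counts` function and its parameters are hypothetical.

```python
from collections import defaultdict

def tumbling_counts(events, window_size, allowed_lateness):
    """Count events per (window_start, key); drop events whose window is already closed.

    events: iterable of (event_time, key) tuples in arrival order.
    The watermark trails the largest event time seen so far by `allowed_lateness`.
    """
    counts = defaultdict(int)
    dropped = []
    max_event_time = None
    for event_time, key in events:
        if max_event_time is None or event_time > max_event_time:
            max_event_time = event_time
        watermark = max_event_time - allowed_lateness
        window_start = (event_time // window_size) * window_size
        window_end = window_start + window_size
        if window_end <= watermark:
            dropped.append((event_time, key))  # too late: window already finalized
            continue
        counts[(window_start, key)] += 1
    return dict(counts), dropped

# Arrival order differs from event-time order: 8 and 9 arrive out of order.
events = [(1, "a"), (4, "a"), (12, "a"), (8, "a"), (25, "a"), (9, "a")]
counts, dropped = tumbling_counts(events, window_size=10, allowed_lateness=5)
```

Here the event at time 8 is late but still accepted, while the event at time 9 arrives after the watermark has moved past its window and is dropped. In a real design you would route such events to a side output or a reprocessing path.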

Big Data Frameworks and Streaming

Demonstrate working knowledge of Spark internals (lazy evaluation, DAGs, shuffle, skew mitigation, broadcast joins, caching) and when to prefer Hadoop MapReduce for massive disk-bound workloads or compliance-driven batch processing needs.
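Skew mitigation is a common follow-up question, and key salting is one technique worth being able to sketch. The plain-Python example below mimics the two-stage aggregation you would write in Spark: first aggregate on a salted key so a hot key spreads across several partitions, then strip the salt and combine. The `salted_sum` helper and the data are illustrative assumptions.

```python
import random
from collections import Counter

def salted_sum(records, num_salts, seed=0):
    """Sum values per key using a two-stage, salted aggregation."""
    rng = random.Random(seed)
    # Stage 1: aggregate on (key, salt); a hot key is split across num_salts buckets.
    partial = Counter()
    for key, value in records:
        salt = rng.randrange(num_salts)
        partial[(key, salt)] += value
    # Stage 2: drop the salt and combine the partial sums.
    final = Counter()
    for (key, _salt), subtotal in partial.items():
        final[key] += subtotal
    return dict(final), dict(partial)

# One hot key dominates the data set.
records = [("hot", 1)] * 1000 + [("cold", 5)] * 3
totals, partials = salted_sum(records, num_salts=8)
largest_hot_bucket = max(v for (k, _s), v in partials.items() if k == "hot")
```

The final totals match an unsalted aggregation, but no single bucket has to process all rows for the hot key, which is the property that relieves a straggling task during the shuffle.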

For streaming, show fluency with Kafka plus Flink/Spark Structured Streaming, covering event-time windows, state management, backpressure, and operational strategies for replay, reprocessing, and exactly-once delivery in high-throughput systems powering real-time product features.
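Exactly-once results in practice often come down to idempotent writes: if a batch is replayed, the sink must not apply it twice. The sketch below shows one way to model that by tracking the highest applied offset per partition. The `IdempotentSink` class is hypothetical, and it assumes offsets arrive in increasing order within a partition.

```python
class IdempotentSink:
    """Applies each (partition, offset) at most once so replays do not double count."""

    def __init__(self):
        self.totals = {}     # key -> running sum
        self.committed = {}  # partition -> highest offset already applied

    def write(self, partition, offset, key, amount):
        if offset <= self.committed.get(partition, -1):
            return False  # already applied, so skip on replay
        # In a real system the state update and the offset commit
        # would need to happen atomically, e.g. in one transaction.
        self.totals[key] = self.totals.get(key, 0) + amount
        self.committed[partition] = offset
        return True

batch = [(0, 0, "u1", 10), (0, 1, "u1", 5), (1, 0, "u2", 7)]
sink = IdempotentSink()
first_pass = [sink.write(*record) for record in batch]
replay_pass = [sink.write(*record) for record in batch]  # simulated reprocessing
```

After the replay, the totals are unchanged, which is the behavior interviewers are usually probing for when they ask how your pipeline recovers from a consumer restart.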

Cloud Architecture and DevOps Maturity

Deeply understand one cloud’s data stack end-to-end, including IAM/VPC, storage tiers, serverless compute, warehouse configuration, cost optimization, IaC with Terraform/CloudFormation, and CI/CD for pipelines and dbt models with test gates and policy-as-code for governance.

Be prepared to justify design choices with cost-performance trade-offs, multi-region replication strategies, disaster recovery objectives (RPO/RTO), and security controls for PII at column/row level with audited access paths.

Behavioral Excellence with Engineering Rigor

Prepare STAR narratives that highlight platform ownership: reducing cloud spend, improving SLA adherence, resolving data incidents, enabling ML use cases, and collaborating across product, infra, and privacy teams while navigating ambiguity and changing requirements.

For Amazon-style loops, map stories to leadership principles such as ownership, customer obsession, bias for action, dive deep, and insist on the highest standards, with measurable outcomes and lessons learned.

Role of AI in Data Engineering

AI is transforming data engineering from manual pipeline work to intelligent, assistive, and increasingly autonomous platforms that write code, optimize warehouses, and enforce governance at scale, raising the bar for FAANG teams in 2025.

Generative AI copilots accelerate SQL/Python authoring, suggest query and storage optimizations, and auto-document datasets, shifting engineers toward system design, reliability, privacy, and cost-performance trade-offs rather than repetitive tasks.

AI-driven observability detects anomalies and drift, proposes schema fixes, and tunes clusters and queries, while predictive maintenance reduces downtime by anticipating failures across complex DAG dependencies.

LLMOps and GenAI Pipelines

Building LLM features requires data engineers to manage RAG pipelines, prompt versioning, inference telemetry, and vector databases, with strict SLAs on latency, cost, and safety, bridging data engineering, MLOps, and LLMOps.

Operational patterns emphasize lineage from events to embeddings to inference logs, rollback pathways, and human-feedback loops, enabling governed AI features at consumer scale.
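The retrieval step of a RAG pipeline can be sketched in a few lines. The example below ranks documents by cosine similarity against a query vector. The three-dimensional embeddings, document IDs, and `embedding_version` field are made up for illustration; a real system would use a model-generated embedding and a vector database.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vec, index, k=2):
    """Return the doc_ids of the k documents most similar to the query."""
    scored = sorted(index, key=lambda doc: cosine(query_vec, doc["embedding"]), reverse=True)
    return [doc["doc_id"] for doc in scored[:k]]

# Hypothetical index; the version tag supports lineage and rollback of embeddings.
index = [
    {"doc_id": "refund-policy", "embedding": [0.9, 0.1, 0.0], "embedding_version": "v1"},
    {"doc_id": "shipping-faq", "embedding": [0.1, 0.9, 0.0], "embedding_version": "v1"},
    {"doc_id": "privacy-notice", "embedding": [0.0, 0.2, 0.9], "embedding_version": "v1"},
]
query_vec = [0.8, 0.3, 0.0]
top_docs = retrieve(query_vec, index, k=2)
```

Storing a version alongside each embedding is what makes lineage from source event to inference log possible when the embedding model changes.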

Automation and Self-Optimization

AI agents increasingly automate ETL-to-ELT migrations, right-size compute, and apply “carbon-aware” scheduling to cut cost and emissions without missing SLAs, while senior engineers focus on contracts, privacy-by-design, and failure isolation.

AI is not replacing engineers; it is multiplying their output while demanding deeper judgment on governance, security, and user impact across batch, streaming, and AI-serving layers.

Skills to prioritize in 2025

- LLMOps fundamentals: prompt/version control, RAG pipelines, inference optimization (batching, streaming, caching), and real-time observability across latency, cost, and drift.

- AI-assisted development: leveraging copilots for SQL/Python generation, test scaffolding, and documentation, with human-in-the-loop review and guardrails for correctness and compliance.

- Data products for AI: vector databases, embedding refresh strategies, feature stores, and lineage that ties training data, prompts, and outputs to governance and audit requirements.
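Two of the LLMOps ideas above, prompt version control and caching, fit together naturally: if the cache key includes the prompt version, a prompt change invalidates stale responses automatically. The sketch below assumes a hypothetical `PromptCache` class and uses a stand-in function in place of a real model call.

```python
import hashlib

class PromptCache:
    """Caches generated responses keyed by prompt version and user input."""

    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt_version, user_input):
        raw = f"{prompt_version}\x00{user_input}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def get_or_compute(self, prompt_version, user_input, generate):
        key = self._key(prompt_version, user_input)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = generate(user_input)
        self._store[key] = result
        return result

calls = []

def fake_model(text):
    """Stand-in for an expensive inference call."""
    calls.append(text)
    return text.upper()

cache = PromptCache()
first = cache.get_or_compute("v1", "hello", fake_model)
second = cache.get_or_compute("v1", "hello", fake_model)  # served from cache
third = cache.get_or_compute("v2", "hello", fake_model)   # new version, recomputed
```

The hit and miss counters are the kind of signal you would export to your observability stack to track cache effectiveness against inference cost.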

AI becomes the co-pilot for the FAANG data engineer, accelerating delivery while raising expectations around platform reliability, explainability, and cost efficiency across batch, streaming, and AI-serving layers.


Conclusion

FAANG-ready data engineers prove platform ownership: reliable, secure, cost‑efficient data systems across batch, streaming, and AI-serving, not just tool familiarity. The strongest candidates pair advanced SQL, Python/Java, Spark/Flink, and warehouse depth with cloud-native design, robust orchestration (Airflow/dbt), governance, lineage, and observability tied to clear SLAs/SLOs and cost metrics.

Launch Your FAANG Data Engineering Journey

Elevate your data career with the Data Engineering Masterclass, where platform thinking meets FAANG expectations. Reserve your seat to learn how top engineers architect scalable, AI-powered systems using GenAI and modern lakehouse patterns, then see those ideas come alive through live problem solving on real interview questions and proven frameworks.

You’ll explore how LLMs, GenAI, and Agentic AI reshape pipelines, observability, and reliability so your designs meet strict SLAs, governance, and cost targets in production.

Guided by Samwel Emmanuel, Ex-Google and Salesforce, now at Databricks, you’ll gain practical strategies for cracking data engineering interviews and for building systems that stand up to internet-scale demands. This masterclass is backed by a trusted community of over 21K professionals, strong outcomes including a 66% average salary hike, and a 50% money‑back guarantee that underscores a focus on real results.

FAQs

1. What skills does this roadmap emphasize for FAANG readiness?

Advanced SQL, Spark or Flink, cloud warehouses, Airflow and dbt orchestration, governance and lineage, plus AI-aware pipeline design and optimization.

2. How does GenAI change data engineering in 2025?

GenAI accelerates code and documentation, optimizes workloads, and enables governed LLM pipelines, raising expectations for reliability, privacy, and cost efficiency.

3. What portfolio projects signal senior-level impact?

End-to-end lakehouse, real-time streaming with Kafka and Flink, domain data products with SCD Type 2, and cost-aware cloud ELT replatforming.

4. How should interview prep be structured for top data roles?

Drill SQL and Python, rehearse Spark internals, design batch and streaming systems, quantify SLAs and costs, and practice clear trade-off narratives.